MX IPTV in 2026 is best understood as IPTV delivery designed to work reliably for users who expect stable playback across modern devices and networks. If you found this page by searching “MX IPTV,” you’re usually looking for one of three things: consistent performance, clear device compatibility, or a setup that doesn’t fall apart during busy hours. This guide stays DMCA-safe by treating IPTV as a technology and operations system (monitoring, uptime, scalability, and multi-device reliability) rather than making content claims or publishing lists.


DMCA-safe clarity (short): IPTV is a delivery technology; legality depends on licensing and jurisdiction. This article does not provide bypass methods or any instructions intended to enable infringement.

What “MX IPTV” means in 2026 (and what it does not mean)

When people search “MX IPTV,” they typically mean: “I want IPTV playback that works consistently in my real setup.” That setup might be a home with multiple screens, an office environment, or a hospitality scenario where reliability matters more than anything else. In practice, users aren’t asking for “more content”—they’re asking for fewer problems: less buffering, faster start, and predictable behavior across devices.

A professional way to define MX IPTV in 2026 is:

- A delivery workflow (device + network + service operations)

- A reliability goal (stable start, stable playback, stable switching)

- A multi-device requirement (TV endpoint + mobile + browser + optional desktop control)

What this page does not do:

- No channel lists

- No entertainment brand references

- No “free/unlock” language

- No instructions for bypassing licensing or restrictions

Quote (reliability mindset): “If it’s not measurable, it’s not dependable.”

The real intent behind MX IPTV searches (fast map)

Most “MX IPTV” searches fall into predictable intent buckets. Mapping intent to a solution path is the fastest way to avoid wasted time and unreliable choices.

Intent → solution map (2026)

| What you want | What it really means | Best-fit direction | What to measure |
|---|---|---|---|
| “No buffering” | network stability + player resilience | improve delivery quality first | jitter, packet loss, rebuffering |
| “Fast playback” | quick startup + stable decode | choose stable endpoint + clean network | startup time, error frequency |
| “Works on all devices” | consistent experience across screens | multi-device design (not single device) | same behavior on TV + mobile |
| “Reliable for business” | predictability + supportability | managed endpoints + clear support flow | repeatable results, support clarity |
| “Hospitality-ready” | standardization at scale | inventory + controlled updates | uptime patterns, device consistency |

Key idea: If your goal is stable performance, you’re selecting a system, not just an app.

Best IPTV Boxes in 2026, reframed safely (endpoints, not hype)

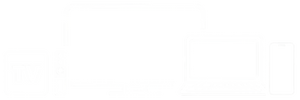

People often ask for the “best boxes” because they want reliability without complexity. The safest and most accurate way to discuss this is to treat boxes as endpoints that reduce variability. In many homes, an Android TV device works well as a dedicated endpoint because it’s designed for consistent living-room behavior: remote-friendly UI, predictable performance, and fewer background variables than a general-purpose computer.

At the same time, a desktop or laptop can still be valuable—not as the only screen, but as a control and diagnostic station (especially for power users and businesses). In 2026, the highest-trust approach is often a combination:

- Android TV endpoint for daily viewing stability

- Mobile device for quick Wi-Fi reality checks

- Browser/desktop for verification and troubleshooting consistency

This avoids the common failure mode where one unstable device becomes the “truth” for the whole system.

Endpoint scorecard (2026): what stays stable under real conditions

| Endpoint type | Stability potential | Maintenance predictability | Best for | Common weak point |
|---|---|---|---|---|
| Android TV endpoint | High | High | living-room reliability | weak Wi-Fi environments |
| Smart TV app endpoint | Medium | Medium | simple, single-screen use | limited diagnostics |
| Mobile endpoint | Medium | Medium | quick checks + secondary screen | Wi-Fi variability |
| Browser/desktop endpoint | High (when controlled) | Medium | diagnostics + verification | background load/updates |

Practical takeaway: A “best box” in 2026 is not about marketing. It’s about how reliably an endpoint behaves when the network is imperfect and the home is busy.

The reliability foundation: QoE vs QoS (what users feel vs what causes it)

If you want MX IPTV to “just work,” you need one simple mental model:

- QoE (Quality of Experience) = what you feel

- QoS (Quality of Service) = what the network delivers

Many people only look at bandwidth, but bandwidth is not the same as stability. IPTV delivery can fail on a fast connection if the connection has high jitter (delay variation) or intermittent packet loss. Those issues are exactly what cause “random buffering.”
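To make the difference between speed and stability concrete, here is a minimal Python sketch that summarizes a list of delay samples. It assumes you already have round-trip times in milliseconds (for example, from repeated pings), with `None` marking a probe that got no reply. The function name `summarize_link`, the sample values, and the choice of "mean absolute change between consecutive samples" as the jitter measure are illustrative assumptions, not a standard this guide prescribes.

```python
from statistics import mean
from typing import Optional, Sequence


def summarize_link(rtts_ms: Sequence[Optional[float]]) -> dict:
    """Summarize delay samples; None marks a probe that got no reply."""
    received = [r for r in rtts_ms if r is not None]
    lost = len(rtts_ms) - len(received)
    loss_pct = 100.0 * lost / len(rtts_ms) if rtts_ms else 0.0
    # Jitter here = mean absolute change between consecutive received samples.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "avg_rtt_ms": mean(received) if received else None,
        "jitter_ms": mean(deltas) if deltas else 0.0,
        "loss_pct": loss_pct,
    }


# Hypothetical samples: a steady link and a bursty one.
steady = summarize_link([20, 21, 20, 22, 21])
bursty = summarize_link([20, 80, 15, None, 95, 18])
print(steady)
print(bursty)
```

In this made-up data, the bursty link still produces some fast replies, yet its jitter is far higher and one probe is lost. That combination, rather than raw bandwidth, is what tends to show up as "random" buffering.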

QoE/QoS table (2026): the metrics that explain most playback problems

| Metric | Type | What it affects | What “bad” looks like in real life |
|---|---|---|---|
| Startup time | QoE | how fast playback begins | slow starts at certain times |
| Rebuffering rate | QoE | how often playback pauses | frequent short buffering |
| Bitrate stability | QoE | consistent picture quality | constant up/down quality shifts |
| Error frequency | QoE | session stability | restarts, drops, repeated failures |
| Latency | QoS | switching responsiveness | slow switching, sluggish control |
| Jitter | QoS | buffer stability | “random” buffering spikes |
| Packet loss | QoS | stream integrity | sudden stalls or quality collapse |

This table is useful because it helps you diagnose with calm confidence:

- If jitter and loss are unstable, all devices suffer eventually (TV, mobile, desktop).

- If only one device fails while others are stable, the issue is likely endpoint-specific (device health, app stability, background load).
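The two rules above can be written down as a small decision helper. This is a sketch under stated assumptions: the function name `classify_scope`, the device labels, and the wording of the returned hints are all hypothetical, and the input is simply your own observation of which devices played back normally.

```python
from typing import Mapping


def classify_scope(device_ok: Mapping[str, bool]) -> str:
    """Map per-device observations to the layer worth checking first."""
    failing = [name for name, ok in device_ok.items() if not ok]
    if not failing:
        return "no issue observed"
    if len(device_ok) == 1:
        # A single device gives nothing to compare against.
        return "unknown: add a second device for comparison"
    if len(failing) == 1:
        return f"endpoint-specific: check {failing[0]} first"
    if len(failing) == len(device_ok):
        return "shared layer: check network or service side first"
    return "mixed: compare what the failing devices have in common"


print(classify_scope({"tv": False, "mobile": True, "desktop": True}))
print(classify_scope({"tv": False, "mobile": False, "desktop": False}))
```

The helper deliberately returns a "where to look first" hint rather than a verdict, since the observations themselves are coarse.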

Trust signals that separate a reliable system from a risky one

To keep an MX IPTV evaluation in a high-trust lane, it helps to assess systems the way professionals do: by looking for operational signals rather than promises.

Quick trust checklist (2026)

- Monitoring mindset: problems can be detected and explained (not guessed)

- Redundancy language: the system degrades gracefully instead of collapsing

- Support clarity: device vs network vs service-layer isolation is possible

- Transparency: changes and incidents can be described clearly

- Multi-device consistency: similar behavior across endpoints over time

You can use this checklist to evaluate any provider or plan without needing risky comparisons or content claims.

Monitoring, Uptime, Redundancy: How MX IPTV Becomes Reliable in 2026

A guide to MX IPTV stops being “generic” and becomes genuinely useful when it explains why reliability happens. In 2026, stable IPTV delivery is usually the outcome of three operational pillars:

- Monitoring (you can detect and explain issues)

- Uptime discipline (you design for predictable performance)

- Redundancy (you avoid single points of failure and degrade gracefully)

This section stays DMCA-safe and focuses on IPTV as a delivery system—the same way professional services describe reliability.

Monitoring: what “trusted operations” looks like (without buzzwords)

Monitoring is not about complicated dashboards. It’s about being able to answer simple questions consistently:

- When did the issue start?

- Who is affected (one device or many)?

- Which layer is failing (device, local network, service operations)?

- What changed (peak hour load, Wi-Fi congestion, endpoint update)?

If those questions can’t be answered, troubleshooting becomes guesswork. A monitoring mindset replaces guesses with observations that can be checked.

What to monitor (a practical 2026 model)

| Layer | What you watch | Why it matters | What it signals |
|---|---|---|---|
| Endpoint (TV/Android TV/mobile/browser) | error frequency, crashes, session drops | reveals fragility | endpoint-specific instability |
| Home/office network | jitter, packet loss, peak-hour congestion | predicts buffering | stability issues, not speed issues |
| Delivery behavior | startup time, rebuffer events, bitrate stability | reflects real user experience | QoE degradation |
| Support process | reproducibility, time windows, clear questions | reduces friction | operational maturity |
| Changes over time | updates, new devices, layout changes | explains “new problems” | regression patterns |
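As one possible way to track the "delivery behavior" row, the sketch below keeps a tiny session log and normalizes pause counts to a per-hour rate so that short and long sessions can be compared. The `Session` fields, the device labels, and the numbers are hypothetical and entered by hand; nothing here reads data from a real player.

```python
from dataclasses import dataclass


@dataclass
class Session:
    device: str
    startup_s: float       # seconds from pressing play to first frame
    watch_min: float       # minutes of playback observed
    rebuffer_events: int   # number of pauses noticed


def rebuffers_per_hour(s: Session) -> float:
    """Normalize pause count so short and long sessions are comparable."""
    if s.watch_min <= 0:
        return 0.0
    return s.rebuffer_events * 60.0 / s.watch_min


# Hypothetical hand-recorded observations.
log = [
    Session("android-tv", startup_s=2.1, watch_min=90, rebuffer_events=3),
    Session("mobile", startup_s=1.8, watch_min=30, rebuffer_events=4),
]
for s in log:
    print(s.device, s.startup_s, rebuffers_per_hour(s))
```

Here the mobile session has only one more pause than the TV session, but per hour of viewing it pauses four times as often, which is the comparison that matters.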

Quote (support maturity): “If you can’t reproduce it, you can’t resolve it.”

Uptime in 2026: not a number — an operational outcome

Many pages talk about “uptime” like it’s a marketing badge. In reality, uptime is the result of system choices that reduce chaos during busy hours:

- Capacity planning: enough headroom so peak use doesn’t collapse quality

- Predictable update behavior: changes don’t break daily viewing

- Operational response: issues are detected and corrected quickly

- Consistency across endpoints: one weak device doesn’t define the whole experience

What “good uptime behavior” feels like to users

- Playback starts quickly most of the time, not only in ideal conditions

- Buffering is rare and short, not frequent and unpredictable

- Device switching and multi-device use remain stable at peak hours

- When problems happen, they are explainable and recoverable

This is the “enterprise mindset” applied to home and small-business contexts: stable services acknowledge real-world variability and manage it.

Redundancy: the difference between “fails hard” and “recovers smoothly”

Redundancy is the calm, professional way to describe reliability. It doesn’t need specific implementation details to be meaningful. For users, redundancy shows up as:

- fewer total failures during peak time

- fewer long interruptions

- smoother recovery when the network path becomes unstable

- less “all devices fail at once” behavior

Redundancy explained simply

A reliable delivery system is designed so that if one path degrades, the system can:

- route around the problem, or

- reduce quality gracefully instead of stopping completely, or

- recover without forcing constant restarts

This language is safe because it focuses on delivery resilience, not content.

The incident workflow: detect → isolate → resolve → prevent

When you want MX IPTV to be stable, you should evaluate systems by whether they can follow a predictable incident process. This is also how businesses and hospitality environments think: repeatable workflows beat hopeful fixes.

Incident workflow (simple and effective)

- Detect: identify the time window and affected devices

- Isolate: determine whether it’s device, network, or service operations

- Resolve: make the smallest change that improves all endpoints

- Prevent: record what happened so it doesn’t repeat

Even at home, this reduces time wasted and improves outcomes.
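The four steps can be kept honest with a very small record structure. The following is a sketch, not a prescribed tool: the `Incident` class, its method names, and the example notes are assumptions made for illustration. The only idea it encodes is that stages are recorded in order, so "resolve" cannot be logged before "isolate".

```python
from dataclasses import dataclass, field
from typing import Dict

STAGES = ("detect", "isolate", "resolve", "prevent")


@dataclass
class Incident:
    summary: str
    notes: Dict[str, str] = field(default_factory=dict)

    def record(self, stage: str, note: str) -> None:
        """Store a note for the next stage; stages must follow the workflow order."""
        if self.closed:
            raise ValueError("incident already closed")
        expected = STAGES[len(self.notes)]
        if stage != expected:
            raise ValueError(f"expected {expected!r}, got {stage!r}")
        self.notes[stage] = note

    @property
    def closed(self) -> bool:
        """True once all four stages have a note."""
        return len(self.notes) == len(STAGES)


# Hypothetical example notes.
incident = Incident("Evening buffering on Wi-Fi devices")
incident.record("detect", "20:30-22:00, TV endpoint and two phones")
incident.record("isolate", "baseline device stayed stable, so local Wi-Fi is suspected")
incident.record("resolve", "reduced Wi-Fi load during the peak window")
incident.record("prevent", "noted the peak window and the change that helped")
print(incident.closed)
```

Enforcing the order is a design choice: it makes it harder to jump straight to a fix without first writing down what was observed.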

Diagnostic table: symptom → likely layer → safe next step

This table is designed to help users and support teams speak the same language. It avoids “how-to bypass” and stays focused on clean diagnostics.

| Symptom | Most likely layer | What it usually means | Safe next step |
|---|---|---|---|
| Buffering increases at night | local network / peak congestion | Wi-Fi congestion or shared load | compare peak vs off-peak; note time windows |
| Only one device drops | endpoint/device | endpoint instability or app behavior | test on a second device type for comparison |
| Slow startup but stable playback | delivery behavior / routing | latency spikes or path variability | record startup times at different hours |
| Audio out of sync | endpoint | endpoint decoding drift | test alternate endpoint; document pattern |
| Quality constantly changes | network stability | fluctuating throughput/jitter | compare wired vs Wi-Fi if possible |
| Multiple devices fail together | network-wide or operations | shared failure point | capture affected devices + time window for support |

How to use it:

- If one endpoint fails, suspect endpoint health first.

- If multiple endpoints fail together, suspect network-wide conditions or service-side patterns.

This keeps you calm and efficient.

Multi-device stability: why “baseline devices” matter

One of the most professional ways to evaluate MX IPTV is to always keep a “baseline device” that represents stable conditions. Many power users use a desktop or a controlled endpoint for this. The baseline device answers one key question:

Is the problem universal, or is it specific to one endpoint/network condition?

That single step prevents unnecessary changes, reduces support cycles, and improves outcomes across all screens.

Mini case study (home + multi-device): fix the layer that helps everyone

A household uses:

- Android TV endpoint in the main room (Wi-Fi)

- mobile devices on Wi-Fi

- a computer on stable connectivity (baseline)

They notice:

- mid-day playback is stable

- evening playback degrades on Wi-Fi devices

- baseline device remains stable

Interpretation: The weakest layer is the local Wi-Fi environment under load, not the entire delivery chain.

Result: Instead of changing everything, they focus on improving the shared layer that affects all endpoints (local stability), which reduces buffering across the household.

Quote (practical reliability): “Fix the layer that improves every device first.”

Multi-Device Environments in 2026: Home, Business, Hospitality

A big reason “MX IPTV” guides end up looking generic is that they talk only in broad claims. A higher-trust approach in 2026 is to explain how IPTV behaves in multi-device environments, because that’s what users actually experience. Reliability problems rarely come from a single screen; they emerge when devices, networks, and usage patterns collide.

This section adds frameworks, real use cases, and decision tools (tables, scorecards, and mini case studies). Everything remains DMCA-safe and focused on IPTV as a delivery system.

Why multi-device design matters for MX IPTV (2026)

In 2026, most households and many small businesses run multiple streams across:

- a main TV endpoint (often Android TV)

- a secondary TV or Smart TV

- one or more mobile devices

- a browser or computer used for verification and control

When that environment is not designed intentionally, you see familiar symptoms:

- buffering appears only at night (peak hours)

- one device fails first, then others

- quality shifts constantly (“pumping”)

- support becomes frustrating because the problem isn’t described consistently

A multi-device mindset fixes this by introducing two simple ideas:

- Baseline endpoint: a controlled device you trust for comparison

- Consistency testing: check whether issues are device-specific or system-wide

Quote (multi-device truth): “Most ‘service problems’ are really environment problems.”

The 2026 Multi-Device Matrix (home vs business vs hospitality)

This matrix helps users quickly match their environment to a stable pattern. It is meant as a structured decision tool rather than generic advice.

Multi-device environment matrix

| Environment | Primary goal | Typical device mix | Biggest risk | Best stabilizing principle |
|---|---|---|---|---|
| Home (single TV) | “Just works” | 1 TV endpoint + mobile | Wi-Fi variability | keep endpoint consistent |
| Home (power users) | stable multi-room | TV endpoint + mobile + browser/computer baseline | peak-hour congestion | baseline comparisons + reduce Wi-Fi load |
| Small business | predictability | controlled endpoint + documented workflow | update surprises | controlled updates + repeatable setup |
| Hospitality | standardization at scale | simplified endpoints + inventory tracking | issues multiply quickly | device consistency + operational process |

Key takeaway: As you scale from one screen to many, stability depends more on standardization than on “extra features.”

Home Power Users (2026): make reliability repeatable, not lucky

Power users usually want:

- consistent performance across rooms

- fast startup and switching

- fewer “random” issues

- a setup that remains stable during peak hours

What breaks power-user stability most often

- Wi-Fi contention (many devices share the same airspace)

- interference (dense apartments, overlapping networks)

- background updates and downloads

- endpoint differences (one device handles buffering better than another)

A professional home pattern (simple, effective)

- Use a dedicated TV endpoint for daily viewing (Android TV is common)

- Keep a mobile device as a fast Wi-Fi comparison tool

- Use a browser/computer baseline to test if the problem is universal or endpoint-specific

- Record time windows when issues appear (peak vs off-peak)

This “pattern” is powerful because it avoids constant switching and guessing.
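Recording time windows pays off once the notes are counted. The sketch below splits a list of buffering timestamps into peak and off-peak buckets. The 19:00 to 22:59 peak window, the function name `peak_split`, and the timestamps are assumptions chosen for illustration; adjust the window to whatever "busy hours" means in your own environment.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable

PEAK_HOURS = range(19, 23)  # assumed evening window, 19:00-22:59


def peak_split(event_times: Iterable[str]) -> Counter:
    """Count buffering events inside and outside the assumed peak window."""
    counts = Counter(peak=0, off_peak=0)
    for stamp in event_times:
        hour = datetime.fromisoformat(stamp).hour
        counts["peak" if hour in PEAK_HOURS else "off_peak"] += 1
    return counts


# Hypothetical hand-recorded buffering events.
events = [
    "2026-01-10 08:15:00",
    "2026-01-10 20:40:00",
    "2026-01-10 21:05:00",
    "2026-01-11 13:00:00",
    "2026-01-11 21:30:00",
]
result = peak_split(events)
print(result["peak"], result["off_peak"])
```

If most events land in the peak bucket across several days, that supports the congestion explanation; an even spread points toward something that is not time-dependent.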

Mini case study (home power user)

A household uses one TV endpoint and multiple mobile devices. Quality is stable in the morning but buffers at night. They compare:

- TV endpoint on Wi-Fi (night = unstable)

- mobile on Wi-Fi (night = unstable)

- baseline device on stable connection (night = mostly stable)

Interpretation: the shared weak layer is the local Wi-Fi environment under load, not the overall system.

Outcome: stability improves once they prioritize the main endpoint’s connection quality and reduce Wi-Fi load during peak hours.

Businesses (2026): predictable operations beat “features”

A business environment—waiting room, reception, meeting space—has a different standard:

- predictability is more important than customization

- repeatable setup is more important than experimentation

- support readiness is more important than “new options”

Business-grade principles that keep playback stable

- documented device setup (same steps every time)

- controlled change management (updates happen on a schedule)

- baseline behavior captured (what “good” looks like)

- clear incident notes (time, device, pattern)

This doesn’t require complicated tooling. It requires discipline.

Mini case study (small business)

A reception screen must remain stable all day. The office finds that issues correlate with busy Wi-Fi periods. Instead of replacing endpoints repeatedly, they standardize the setup and collect:

- time windows of degradation

- which endpoints were affected

- whether the behavior was repeatable

Outcome: support becomes faster because the problem is described in a structured way, and stability improves because the environment is treated like a small system.

Hospitality (2026): standardization, inventory, and “no surprises”

Hospitality is where small problems become big problems. The same issue across multiple rooms turns into a poor guest experience and heavy support load. The core principles are:

- standardize endpoints (same device model and settings profile)

- track inventory (which device is where)

- control updates (roll out changes intentionally)

- reduce variability (avoid mixed endpoints across rooms)

In hospitality, the “best” solution is the one that produces the same behavior everywhere—not the one with the most options.
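Standardization and inventory tracking can be checked mechanically once both are written down. The sketch below compares each room's device record against one standard profile and reports the differences. Every value in it (room numbers, model names, firmware strings, the `STANDARD` dictionary, and the `find_drift` helper) is hypothetical and stands in for whatever inventory format a property actually uses.

```python
from typing import Dict

# Hypothetical standard profile for every room device.
STANDARD = {"model": "box-a", "firmware": "1.4.2", "profile": "guest-room"}


def find_drift(inventory: Dict[str, Dict[str, str]]) -> Dict[str, Dict[str, str]]:
    """Return, per room, the settings that differ from the standard profile."""
    drift = {}
    for room, device in inventory.items():
        diff = {
            key: device.get(key)
            for key, expected in STANDARD.items()
            if device.get(key) != expected
        }
        if diff:
            drift[room] = diff
    return drift


# Hypothetical inventory: which device is where, and how it is set up.
inventory = {
    "101": {"model": "box-a", "firmware": "1.4.2", "profile": "guest-room"},
    "102": {"model": "box-a", "firmware": "1.3.9", "profile": "guest-room"},
    "103": {"model": "box-b", "firmware": "1.4.2", "profile": "guest-room"},
}
print(find_drift(inventory))
```

Rooms that appear in the output are the ones most likely to behave differently from the rest, which makes them a sensible first stop when an issue is reported.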

The MX IPTV Reliability Scorecard (2026) — a practical decision tool

This scorecard turns “trust” into a measurable checklist. It can be used for home, business, and hospitality.

Reliability scorecard (0–2 per line, total /20)

| Category | What to look for | Score (0–2) |
|---|---|---|
| Peak-hour stability | remains usable when networks are busy | |
| Multi-device consistency | similar behavior across endpoints | |
| Error visibility | failures are explainable, not mysterious | |
| Recovery behavior | sessions recover without repeated restarts | |
| Support clarity | device/network/ops isolation is possible | |
| Change predictability | updates don’t create new surprises | |
| Network resilience | tolerant of mild variability | |
| Operational maturity | monitoring mindset and transparency | |
| Standardization readiness | easy to reproduce setup | |
| Scalability | stability remains as devices increase | |

Interpretation:

- 16–20 = strong reliability signals

- 11–15 = acceptable but needs environment discipline

- ≤10 = unstable setup risk (usually due to variability and weak support)
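The scoring arithmetic is simple enough to sketch in a few lines of Python. The category names and the three bands come from the scorecard above; the function name `score_setup` and the example scores are illustrative assumptions.

```python
from typing import Dict, Tuple

CATEGORIES = (
    "Peak-hour stability",
    "Multi-device consistency",
    "Error visibility",
    "Recovery behavior",
    "Support clarity",
    "Change predictability",
    "Network resilience",
    "Operational maturity",
    "Standardization readiness",
    "Scalability",
)


def score_setup(scores: Dict[str, int]) -> Tuple[int, str]:
    """Validate 0-2 scores for all ten categories and return (total, band)."""
    for name in CATEGORIES:
        if scores.get(name) not in (0, 1, 2):
            raise ValueError(f"{name}: score must be 0, 1, or 2")
    total = sum(scores[name] for name in CATEGORIES)
    if total >= 16:
        band = "strong reliability signals"
    elif total >= 11:
        band = "acceptable but needs environment discipline"
    else:
        band = "unstable setup risk"
    return total, band


# Hypothetical scores: strong everywhere except two categories.
example = dict.fromkeys(CATEGORIES, 2)
example["Network resilience"] = 1
example["Scalability"] = 0
print(score_setup(example))
```

Requiring a score for every category is intentional: a skipped line would otherwise count as zero and quietly push a setup into a lower band.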

Quote (calm decision rule): “Choose repeatability over excitement.”

If MX IPTV is going to be reliable in 2026, the best approach is simple: evaluate it like a professional service, not like a one-time purchase. That means measuring performance across time windows (especially evenings), verifying behavior across multiple devices, and choosing a plan length based on how long you need to confirm stability. The remaining sections provide practical tools for that: an expanded diagnostic table, an evaluation-based plans table, legal clarity, and an FAQ.

Troubleshooting framework (DMCA-safe): device → network → operations

When something fails, most people change too many things at once. A calmer approach is to isolate one layer at a time:

- Device layer — endpoint stability and session behavior

- Local network layer — jitter, packet loss, Wi-Fi congestion patterns

- Operations layer — wider performance patterns and support diagnostics

This layered model is safe because it focuses on reliability diagnostics, not bypass methods.

Quote (fast resolution rule): “Don’t chase every symptom. Identify the layer first.”

Diagnostic table (expanded): Symptom → likely layer → safe next step (2026)

| Symptom (what you notice) | Most likely layer | What it usually indicates | Safe next step |
|---|---|---|---|
| Buffering increases mainly at night | Local network / peak congestion | Wi-Fi congestion or shared load effects | compare peak vs off-peak; note times and affected devices |
| Only one device fails repeatedly | Device layer | endpoint instability or device-specific limitations | test on a second device type; document repeatability |
| Startup becomes slow but playback stays stable | Delivery behavior / routing | latency variability or path changes | record startup times across 3–5 sessions |
| Quality keeps shifting up and down | Network stability | fluctuating throughput or jitter | compare wired vs Wi-Fi if possible; note distance/interference |
| Audio drifts out of sync over time | Device layer | endpoint decoding drift under load | test a different endpoint; note session length before drift |
| Playback drops and needs frequent restarts | Device or operations | session fragility or error spikes | capture time window + device type + frequency |
| Several devices fail at the same time | Network-wide or operations | shared failure point | capture list of affected devices and exact time window |
| Works on mobile but not on TV endpoint | Endpoint difference | TV endpoint sensitivity or Wi-Fi placement | test on another endpoint; compare connection types |
| Works off-peak, fails during busy hours | Capacity stress / local congestion | environment load sensitivity | keep a baseline device test; record peak behavior consistently |

What to collect before contacting support (fast, useful)

- time window of the issue (e.g., 20:30–22:00)

- which devices were affected (TV endpoint, mobile, browser)

- whether it is repeatable (every night, random, only weekends)

- whether the issue is endpoint-specific or system-wide

This makes support faster because it avoids vague descriptions and anchors the problem in observable patterns.
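One way to keep those four items together is a small record that renders to plain text for a support message. This is a minimal sketch: the `SupportReport` class, its field names, and the example values are hypothetical, and the output format is not any provider's required template.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SupportReport:
    time_window: str      # e.g. "20:30-22:00"
    devices: List[str]    # endpoints that showed the issue
    repeatability: str    # e.g. "every night", "random", "only weekends"
    scope: str            # "endpoint-specific" or "system-wide"

    def to_text(self) -> str:
        """Render the four observations as a short plain-text summary."""
        return "\n".join([
            f"Time window: {self.time_window}",
            f"Devices affected: {', '.join(self.devices)}",
            f"Repeatability: {self.repeatability}",
            f"Scope: {self.scope}",
        ])


# Hypothetical example report.
report = SupportReport(
    time_window="20:30-22:00",
    devices=["TV endpoint", "mobile"],
    repeatability="every night",
    scope="system-wide",
)
print(report.to_text())
```

Because every field is required, the report cannot be created with one of the four observations missing.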

Plans as evaluation (calm, professional, 2026)

Instead of choosing a plan by price alone, choose a plan length based on how long you need to confirm:

- peak-hour stability

- multi-device consistency

- support responsiveness and clarity

- “no surprises” behavior over time

This evaluation mindset works for individuals and scales to business/hospitality environments.

Plans table (1 / 3 / 6 / 12 / 24 months) — evaluation-first

| Plan length | Best for | What you can verify in that time | Decision checkpoint |
|---|---|---|---|
| 1 month | Baseline test | startup speed, buffering frequency, basic support response | keep only if peak hours are acceptable |
| 3 months | Real-world proof | multi-device behavior across routines | continue if issues are explainable and repeatable fixes exist |
| 6 months | Stable routine | fewer surprises across updates and usage cycles | continue if reliability remains consistent across weeks |
| 12 months | Long-term confidence | predictable operations and sustained quality | continue if support and stability remain mature and consistent |
| 24 months | Scale and standardize | multi-room / long-term operations goals | choose only after long stability is proven |

Internal link (plans and pricing, evaluation path):

https://worldiptv.store/world-iptv-plans/

Scalability: how MX IPTV grows from one screen to many

Scalability means the setup remains stable when you add:

- devices (more TVs, more mobiles)

- users (family members, customers, guests)

- time (updates, seasonal congestion, routine changes)

A scalable setup usually has:

- a stable primary endpoint (often an Android TV endpoint)

- a baseline test endpoint (browser/computer)

- consistent behavior across peak hours

- a documented approach to issues (layer isolation + time windows)



Legal Clarity

- IPTV is a delivery technology; legality depends on licensing and jurisdiction.

- This article does not provide bypass methods or instructions intended to enable infringement.

- Users are responsible for ensuring the services and content they access are properly authorized.

Deep FAQ

1) What is MX IPTV in 2026 (in practical terms)?

A delivery setup focused on stable playback across devices, built around endpoint choice, network stability, and operational reliability.

2) Why do MX IPTV pages often look “generic” online?

Many reuse the same structure without adding measurable reliability frameworks. This guide uses metrics, tables, and operational thinking to stay useful and distinct.

3) What’s the fastest way to improve stability?

Identify whether the issue is device-specific or system-wide, then fix the layer that benefits all devices first.

4) Why does buffering happen even on fast internet?

Because stability (jitter/packet loss) matters as much as speed. Unstable delivery causes pauses even with high bandwidth.

5) Which matters more: the device or the network?

Both matter, but unstable networks tend to affect all endpoints. One weak endpoint can also create isolated problems.

6) What does “monitoring” mean for normal users?

It means you can describe issues clearly (time window, devices affected, repeatability) so problems can be diagnosed and resolved logically.

7) What are the most important experience metrics?

Startup time, buffering frequency, bitrate stability, and session error frequency.

8) How do I know if it’s a Wi-Fi issue?

If problems spike during peak hours or only on Wi-Fi devices, congestion or interference is likely.

9) Why does one device fail while others work?

Endpoints behave differently under load. If only one device fails repeatedly, the issue is often endpoint-specific.

10) What makes a setup “scalable” for a household?

Consistent endpoint strategy, stable network behavior, and a repeatable diagnostic approach.

11) What changes in a business environment?

Predictability and repeatability matter most: controlled updates, documented setup, and clear support expectations.

12) What changes in hospitality?

Standardization and inventory matter: consistent endpoints, controlled rollouts, and fast isolation of issues.

Closing summary (2026)

MX IPTV works best in 2026 when you treat it like a reliability system: choose stable endpoints, measure peak-hour behavior, and isolate problems by layer instead of guessing. The diagnostic tables and scorecards above help you turn “it buffers sometimes” into a clear pattern that can be evaluated and improved. If you want a calm evaluation path, use plan length as a testing window and scale only after stability is repeatable. That’s the most dependable way to build a stable MX IPTV experience—without risky claims, and with a professional, trust-first mindset.